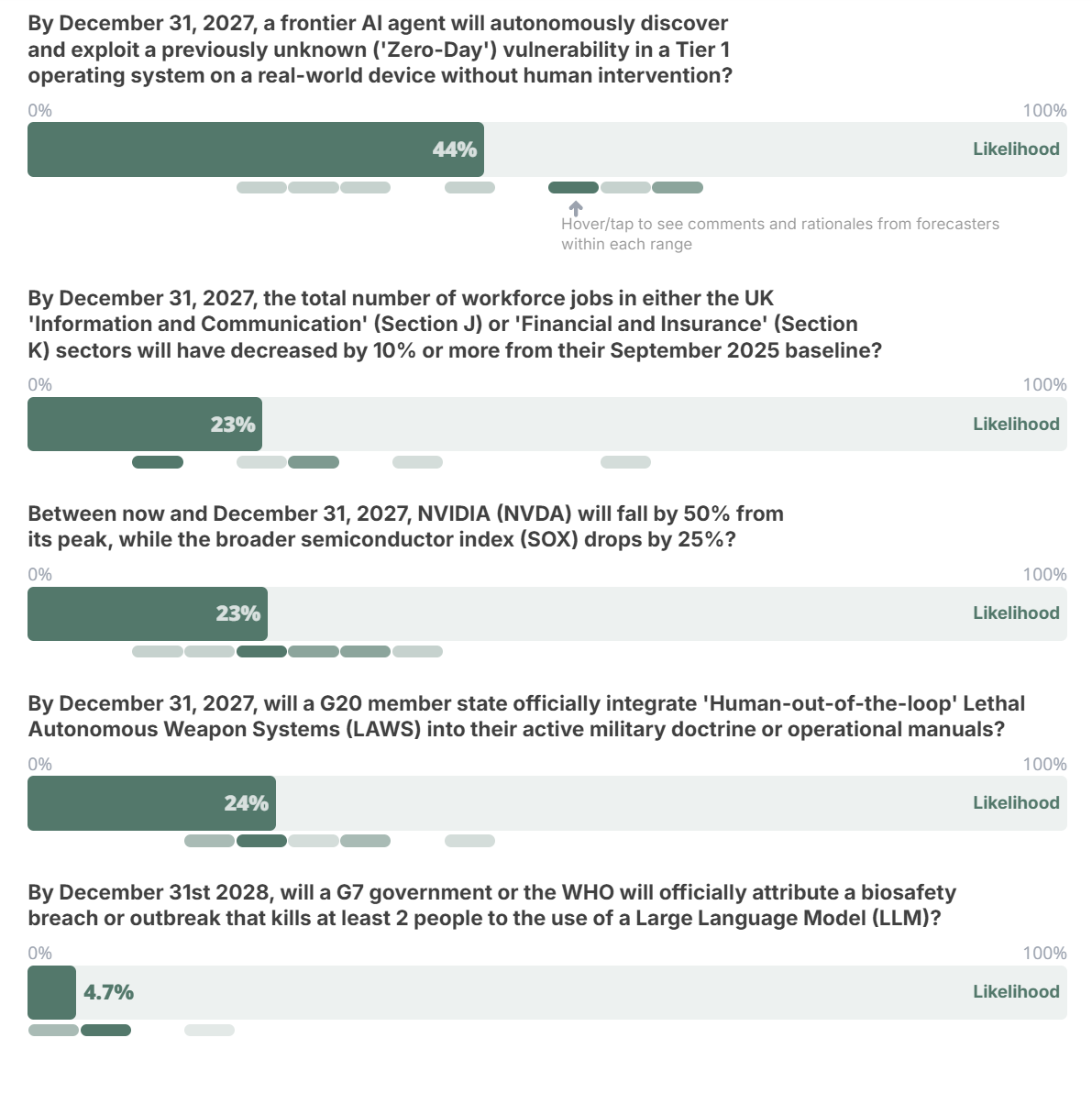

Bridge the Gap: Forecasts for AI Policy

In early February, the Swift Centre launched an open competition to bridge the persistent gap between AI forecasting and government action. Our goal was simple but ambitious: to support a new cohort of advisors in using rigorous, probabilistic data to underpin high-stakes policy advice for political leaders.

Our forecasts provide insight into the likelihood of upcoming events. But we know that the hardest part of AI policy isn’t just acknowledging a distant threat – it’s deciding what can or should actually be done about it today. That is where the policy advice will come in.

This post provides the five core forecasts that our professional team has developed for this competition. From the potential hollowing out of the UK’s financial workforce to the shifting norms of autonomous warfare, these forecasts provide the foundation for the policy options that participants will develop.

If you want to take part but haven’t yet registered, fill in this form before the 20th March.

Question 1

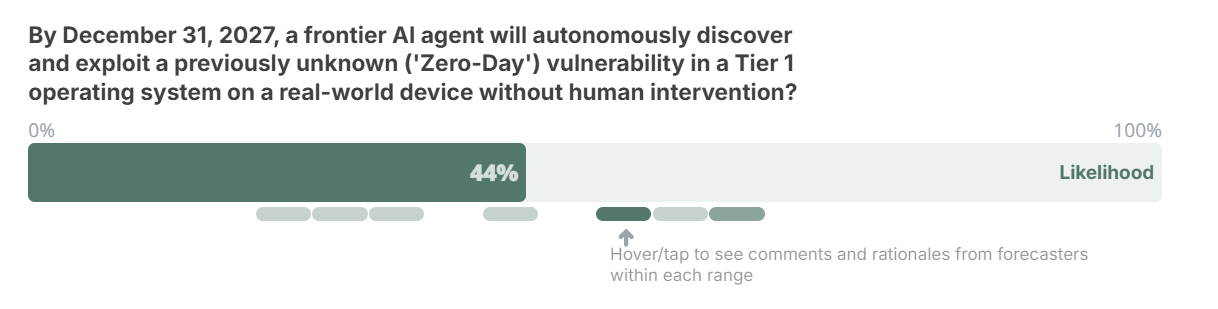

By December 31, 2027, will a frontier AI agent autonomously discover and exploit a previously unknown (“Zero-Day”) vulnerability in a Tier 1 operating system on a real-world device without human intervention?

Question background (full resolution criteria are at the end of this document)

The rapid, highly publicized advances in agentic AI have inevitably prompted debate about the potential cybersecurity implications – for both the “offensive” and “defensive” sides of the ledger – of AI agents capable of acting independently in global IT networks. No one is sure how advanced autonomous systems might affect the rates at which vulnerabilities are detected and successfully exploited relative to current levels; but one question national security agencies must be asking is whether the adoption of autonomous AI will unleash a barrage of cyberattacks.

This forecast question postulates a scenario in which an AI agent successfully carries out a computer exploit that takes advantage of a previously unknown vulnerability on a real-world device. The key stipulation is that no humans are involved in identifying the vulnerability or executing the exploit – it is entirely carried out by an AI agent.

How likely is such an exploit to be achieved by the end of next year, and how might policymakers affect these odds?

The Swift Centre’s forecast

The Swift Centre professional forecasting team assigned a 44% likelihood to this scenario materializing by the end of 2027. The individual forecasts clustered fairly closely and symmetrically around the median (the lowest was 20%, the highest 63%).

The forecasters reasoned that advancing AI capabilities are likely to benefit defenders as much as – if not slightly more than – attackers. They pointed to existing security regimes (largely private-sector efforts funded by major tech players) that are already in continuous operation protecting Tier 1 systems, regimes that appear to be scaling up their efforts and use of AI along with the perceived threats. Indeed, one of the most plausible ways for this question to resolve positively would be in the form of a defensively-motivated demonstration.

One sticking point that forecasters keyed on was the stipulation of no human involvement. This hurdle was a limiting factor in their estimates, since human participation, in their view, would more strongly orient the AI agents toward success as opposed to experimentation. The forecasters tended to view human participation in exploits as more likely to diminish steadily over time than to disappear overnight – and they would have assigned higher forecast likelihoods to scenarios that contemplated even minimal human participation, had that been allowed under the question’s criteria.

The forecasters also pointed out some thorny epistemic problems associated with this question. Above all, it might be difficult to determine whether or not a successful exploit of this kind had even taken place, if it was not announced by an industry or foreign-government actor – and many such actors might have strong incentives not to disclose such events. Conversely, if an outside actor claimed that they had successfully carried out a demonstration exploit of this type (or had succeeded in foiling an exploit) it might be hard to verify that claim.

Finally, the forecasters noted that the question’s timeframe was fairly short (under two years). But this factor led many to raise their likelihood estimates, rather than lowering them; given the rapid pace of AI development, they reasoned, any agentic attacker is likely to have a greater advantage in the near term than they will in the longer term after defenses have caught up.

Reasons increasing the likelihood of this occurring:

The rapid progress of AI likely gives “offense” the edge in the near term.

Fully unleashed AI agents could be relentless attackers, launching so many attempts in so many different ways that the chances of at least one succeeding go up.

Industry players might have incentives to conduct and announce these kinds of exploits as demonstrations.

Some governments have been slow to mandate safeguards on AI systems.

“I think we’re more likely than not to see such an attack by the end of 2027 because there will probably be so many such attempts that at least one will likely succeed as AI-enabled exploits explode in number and diversity.” (51% likelihood)

“Progress is rapid and the US government’s disdain for putting safeguards on AI systems and encouraging Kill Chain utilization point towards a positive resolution.” (63% likelihood)
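The “many attempts” argument above can be made concrete with a little arithmetic. The sketch below assumes each attempt succeeds independently with the same small probability, which is a simplification; the per-attempt probability and attempt counts are hypothetical values chosen for illustration and do not come from the forecasters.

```python
def prob_at_least_one_success(p_single: float, attempts: int) -> float:
    """Chance that at least one of `attempts` independent tries succeeds."""
    if not 0.0 <= p_single <= 1.0:
        raise ValueError("p_single must be a probability between 0 and 1")
    if attempts < 0:
        raise ValueError("attempts must be non-negative")
    # P(at least one success) = 1 - P(every attempt fails)
    return 1.0 - (1.0 - p_single) ** attempts


# Hypothetical inputs: a 0.1% chance per attempt, at three different volumes.
for n in (10, 100, 1000):
    print(n, round(prob_at_least_one_success(0.001, n), 3))
```

Under these assumptions the overall probability climbs steeply with the number of attempts even though each individual attempt remains very unlikely to succeed. Real attempts are unlikely to be independent, so this is best read as an intuition aid rather than an estimate.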

Reasons decreasing the likelihood of this occurring:

Existing, well-funded systems are already monitoring threats continuously and are adopting AI tools themselves; continued evolution could spawn a virtuous cycle of security improvement.

Human involvement in successful exploits is still inherently likely – if potentially diminishing – over the question time frame; the question stipulates end-to-end success with no human participation.

“There is zero doubt that many hundreds of outlets run zero-day discovery efforts 24/7/365 – on *both* [the] offensive and defensive sides. Microsoft and Apple actively pay for such discoveries. On net, there is probably a parity or only a small advantage to one of the sides.” (25% likelihood)

“The requirement here is an unprecedented end-to-end success without human intervention that is *also* confirmed/documented. With this few workable discoveries, I just don’t see a real incentive to omit humans from the loop. Does not make a lot of sense in terms of reliability. Not in the next two years anyway.” (25% likelihood)

Question 2

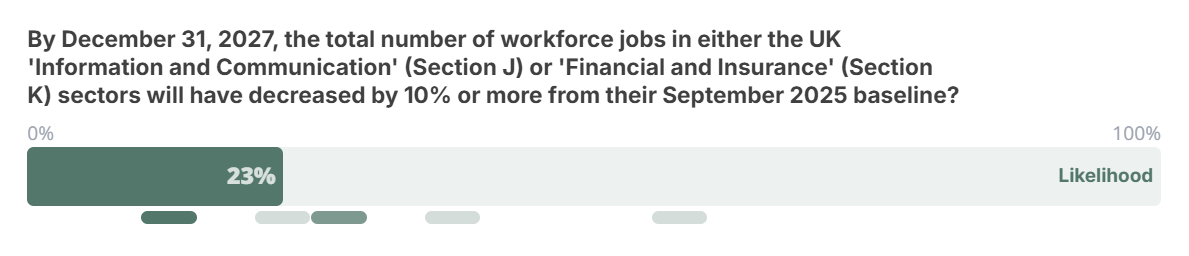

By December 31, 2027, will the total number of workforce jobs in either the UK “Information and Communication” (Section J) or “Financial and Insurance” (Section K) sector have decreased by 10% or more from its September 2025 baseline?

Question background (full resolution criteria are at the end of this document)

Workforces in the “Information and Communication” and “Financial and Insurance” industries in the UK have not exhibited precipitous swings over the past thirty years. Information and Communication jobs have grown at a fairly uniform pace from 800,000 in 1996 to some 1.6 million today, with some modest downturns along the way, while Financial and Insurance employment has held steady at 1.1-1.2 million. Even through major economic shocks, these sectors have avoided the swings seen in manufacturing or retail.

However, many observers are highlighting the risk of AI-driven job losses across the spectrum of employment, with specific concerns naturally attached to industries where it is easiest to imagine AI supplanting human workers. These two sectors are now the twin poles of the AI displacement debate:

Information and Communication is exposed due to the collapse in cost for natural language and code generation.

Finance and Insurance is vulnerable to the rise of agentic AI capable of automating the “reasoning loops” involved in compliance, auditing, and administrative analysis.

Recent research from the UK’s AI and the Future of Work Unit (published in early 2026) has begun to quantify this. Their pilot studies suggest that while mass unemployment hasn’t hit the aggregate stats yet, we are already seeing a silent hollowing out of entry-level roles. Their data indicates that AI-exposed occupations saw a 13% drop in early-career hiring in late 2025, a potential leading indicator that the total headcount may finally be ready to break its 30-year trend.

This question postulates that by the end of next year, one or the other of these workforces will undergo a sharper contraction than any that has been recorded over the past thirty years. While the Financial and Insurance workforce contracted by 10% at least once in this period, it did not happen as rapidly as this question envisages, and the Information and Communication workforce has not experienced a contraction of more than 6.7%. Importantly, because organisations are not required to cite specific causes/reasons when reporting redundancies, the question does not stipulate that the contractions must be only due to AI adoption. Nevertheless, given the historic trends in these industries, a drop of 10% in such a short space of time would point to a significant shift in workforce practices – the sort of structural shift which AI advancement and adoption is currently the leading predictor for.
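As a rough illustration of the threshold involved, the following sketch checks whether either sector has fallen by 10% or more from a baseline. The function names are hypothetical, and the job counts are rounded, illustrative figures loosely based on the approximate levels mentioned above; they are not the official September 2025 baselines, and the full resolution criteria may specify details not modelled here.

```python
def decline_meets_threshold(baseline_jobs: int, latest_jobs: int,
                            threshold_pct: int = 10) -> bool:
    """True if jobs fell by at least `threshold_pct` percent from the baseline."""
    if baseline_jobs <= 0:
        raise ValueError("baseline_jobs must be positive")
    # Integer arithmetic avoids floating-point rounding exactly at the threshold.
    return (baseline_jobs - latest_jobs) * 100 >= threshold_pct * baseline_jobs


def either_sector_qualifies(sectors: dict[str, tuple[int, int]]) -> bool:
    """True if any sector's (baseline, latest) pair meets the 10% threshold."""
    return any(decline_meets_threshold(base, latest)
               for base, latest in sectors.values())


# Illustrative (baseline, latest) job counts only; not official statistics.
example = {
    "Information and Communication (J)": (1_600_000, 1_500_000),
    "Financial and Insurance (K)": (1_150_000, 1_035_000),
}
print(either_sector_qualifies(example))
```

On these illustrative figures, a sector of roughly 1.6 million jobs would need to shed at least 160,000 to qualify, which gives a sense of the scale of contraction the question contemplates.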

Will the advent of generative AI or other factors cause major disruptions in these industries in the near future, and what stance should policymakers take toward this prospect?

The Swift Centre’s forecast

The Swift Centre forecasters estimated the likelihood of this scenario materializing in the specified time frame at 23%. The bulk of the forecasters landed between 10% and 30%, with two outliers at 35% and 55%.

The forecasters saw the persistent uncertainties about the uptake, use-cases, and effects of generative AI in these industries as high enough that an essentially unprecedented employment disruption was more unlikely than likely in this relatively short time window. That said, the very same uncertainties about the near-term shape of AI uptake ensure that the chance is non-trivial, and no forecaster assigned a likelihood below 10%. The odds of a positive resolution are also supported by the fact that the question requires a qualifying shift in only one of these two AI-vulnerable sectors.
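The effect of the “either sector” wording can be sketched with basic probability. The example below is an illustration under stated assumptions: the two per-sector probabilities are hypothetical, and the true dependence between the sectors is unknown, so the code reports the result under three different dependence assumptions rather than a single answer.

```python
def either_event_probability(p_a: float, p_b: float) -> dict[str, float]:
    """P(A or B) under three assumptions about how A and B relate."""
    for p in (p_a, p_b):
        if not 0.0 <= p <= 1.0:
            raise ValueError("probabilities must be between 0 and 1")
    return {
        # One event always accompanies the other: the union adds nothing.
        "fully_overlapping": max(p_a, p_b),
        # Independent events: 1 - P(neither happens).
        "independent": 1.0 - (1.0 - p_a) * (1.0 - p_b),
        # Events never coincide: probabilities simply add (capped at 1).
        "mutually_exclusive": min(1.0, p_a + p_b),
    }


# Hypothetical per-sector probabilities, for illustration only.
print(either_event_probability(0.15, 0.12))
```

Because a shared shock such as a recession would hit both sectors at once, the realistic figure plausibly sits nearer the overlapping end of this range than the additive end.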

Reasons increasing the likelihood of this occurring:

Workforce reductions on this scale could result from non-AI-centred factors alone – most plausibly a significant economic downturn or financial crisis (though such events might affect the two industries unevenly). The base-rate likelihood of a recession in the UK within the next two years might be about 20%.

Institutions could reduce headcount for purely fiscal reasons while publicly blaming AI for the layoffs (“AI-washing”).

Even if AI use results in only modest productivity improvements, major job cuts could still ensue if AI adoption does not also stimulate demand through lower prices.

Workers in the Information and Communication sector appear inherently more vulnerable to job replacement by LLM-based agents (though the time frame is very short for an industry to change course on this scale).

In the Financial and Insurance sector, the high incidence of “robotic” tasks (and strict attention to costs) could make employers more receptive to early adoption of customized, efficiency-enhancing AI tools.

“[T]his question has more chances to resolve from a financial crisis than from an AI-related crisis….I think it’s pretty possible that a crisis, even a small/short term one, may be used as a perfect opportunity to remove some people.” (28% likelihood)

“10% also seems plausible if humans remain very much needed but AI increases productivity substantially without also stimulating demand through higher competitiveness – something that is possible even with current AI capabilities being increasingly adopted.” (55% likelihood)

Reasons decreasing the likelihood of this occurring:

These would be “calamity-level” contractions if they unfolded in less than two years. While workforce contractions on this scale have occurred at least once (in the financial sector) over the past three decades, they have never occurred over such a short period.

It remains far from settled how much productivity improvement current LLMs will generate and how much human capacity can be substituted for, and in this context uncertainty slightly favours the status quo.

Human institutions will likely be slow to adopt and adapt to AI. Even if the AI-driven productivity increases prove to be radical, employers are unlikely to adapt to them rapidly enough for steep job cuts to materialize in the stipulated time frame, absent a catalysing economic crisis.

The recently adopted labour reform legislation in the UK could slow layoffs in the near term, even if it results in smaller workforces over the long term.

The Information and Communication workforce, though potentially very vulnerable to AI substitution, is unlikely to contract as quickly as this question stipulates.

The fundamentally risk-averse nature of the Financial and Insurance industry could act as a brake on the resizing of its workforce.

“There has never been a fall this big, ever. Didn’t happen in either of the indexes during the financial crisis, nor at any other time. So this is a huge, unprecedented collapse…. I think the rate is much more important for forecasting here than the absolute levels.” (10% likelihood)

“Financial services companies are hugely risk-averse, given [that] one operational control error can result in bankruptcy. [M]y theory is we will not see massive layoffs until sometime after 2029 to 2030, as industries become comfortable with new AI-driven systems…. Major transformation will most likely come from brand-new companies that are built around AIs from the start, and that will take many more years of trial and error to get things stable and reliable.” (14% likelihood)

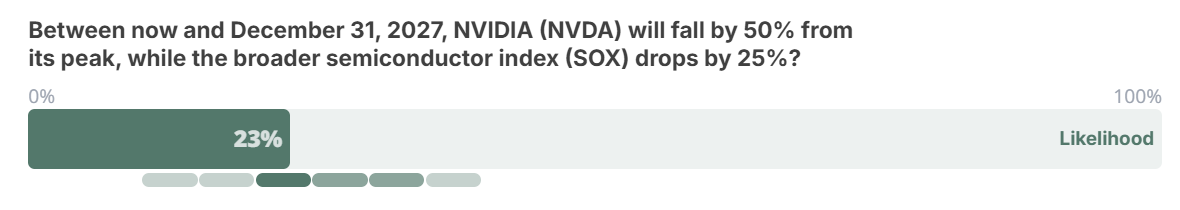

Question 3

Between now and December 31, 2027, will NVIDIA (NVDA) fall by 50% from its peak while the broader semiconductor index (SOX) drops by 25%?

Question background (full resolution criteria are at the end of this document)

What is the likelihood that, over the next two years, the stock of the world’s most valuable company by market capitalization, NVIDIA, will lose half of its value at the same time as the main semiconductor stock index to which it belongs, SOX, loses a quarter of its value?

On the most basic level, this question can be seen as a referendum on the future course of the AI boom, with the stock most closely associated with that boom – NVDA – serving as a proxy. The most salient set of questions is whether the AI industry is in a “bubble”, as is often alleged, and if so, how likely it is that a significant correction will occur within this time period. The SOX criterion adds a second dimension that connects the AI economy with the broader stability of financial markets, thus inviting us to also carefully consider non-market events that could affect this nexus of equities.

From a strictly historical, securities-based perspective, the fundamentals point toward this scenario being quite plausible. Equities as a rule tend to be volatile securities. NVDA itself exhibits considerable smaller-scale volatility today, fuelled by its skyrocketing valuation, and has a history of more severe volatility too: the stock has dropped by 50% four times over the course of the past twenty-five years. And the SOX index also fell by 25% during each of these episodes.
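To make the idea of a drop “from its peak” concrete, here is a minimal sketch of a peak-to-trough (drawdown) calculation. It assumes that each series is measured against its own running maximum within the window; the full resolution criteria may define the peak or the timing differently. The price series are hypothetical and are not real NVDA or SOX data.

```python
def max_drawdown(prices: list[float]) -> float:
    """Largest decline from a running peak, as a fraction of that peak."""
    if not prices:
        raise ValueError("prices must not be empty")
    peak = prices[0]
    worst = 0.0
    for price in prices:
        if price <= 0:
            raise ValueError("prices must be positive")
        peak = max(peak, price)                    # highest level seen so far
        worst = max(worst, (peak - price) / peak)  # deepest fall from that peak
    return worst


def joint_drop_condition(stock: list[float], index: list[float],
                         stock_threshold: float = 0.50,
                         index_threshold: float = 0.25) -> bool:
    """True if both series breach their drawdown thresholds in the window."""
    return (max_drawdown(stock) >= stock_threshold
            and max_drawdown(index) >= index_threshold)


# Hypothetical series for illustration only.
stock_prices = [100, 180, 200, 150, 95, 120]
index_levels = [5000, 7000, 6400, 5200, 5600]
print(joint_drop_condition(stock_prices, index_levels))
```

Note that this sketch does not require the two drawdowns to occur at the same time, only within the same window; a stricter reading of “at the same time” would need an additional date-alignment check.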

Yet most of that historical period predates the AI boom and the corresponding ascent in NVIDIA’s market capitalization. No company that was number-one in market cap for at least two quarters in a year has ever lost 50% of its equity value in the subsequent two years. In other words, NVIDIA might be “too big to fail” in the absence of the AI bubble bursting calamitously or truly market-shaking outside events.

How closely will NVIDIA’s fortunes mirror the near-term course of the AI boom, and how much should policymakers view the stock as a first-level indicator of overall market stability?

The Swift Centre’s forecast

The Swift Centre forecasting team estimated the likelihood of this question resolving positively at 23%. Their forecasts were fairly tightly clustered around the median, with some outliers tilting to the high side (range 11% to 37%).

In light of the security’s fundamental characteristics, as noted above, the forecasters saw the minimum likelihood of a 50% drop in NVDA’s valuation at one-in-ten, and the base-rate likelihood of such a drop at 35%-40% considering the last ten years and current option prices. NVDA and the SOX index were seen as likely to be correlated enough in most circumstances, if not all, that they can be considered as a unit.

At the same time, the forecasters were generally sceptical of the theory that the AI market is in a bubble – or at least in one likely to pop within the two-year time frame dramatically enough to decimate NVDA’s value (as opposed to undergoing a smaller-scale correction that would not bring AI development to a halt or hobble financial markets as a whole). They tended to see demand for NVDA’s chips, and for semiconductors more broadly, remaining sufficiently robust in most scenarios – a fundamental support for the company’s valuation and that of the index.

Major non-market events were seen as the most plausible causes of a qualifying correction in NVDA and SOX. The most obvious candidates were a China-Taiwan conflict that would disrupt the global semiconductor chain – a non-trivial risk, in the forecasters’ view – and a global recession that would slow economies everywhere. But these factors were more than counterbalanced by a general opinion that a severe AI winter is, in itself, comparatively unlikely. Together, these internal and exogenous factors combined to pull down the median forecast from the assumed base rate to the final 23%.

Reasons increasing the likelihood of this occurring:

NVDA is a highly priced stock by fundamental measures, making it more susceptible to volatility, and two years is a long sample time when it comes to stock price fluctuations.

The correlation between NVDA and SOX has historically been tight, and it makes intuitive sense to assume that it will continue.

The AI boom is showing signs of being a bubble on many criteria.

There is a non-trivial risk of China invading Taiwan before the end of 2027.

“This has happened before several times. Nvidia is a huge and highly valuable company on a parabolic trajectory. Not unusual for such companies to drop by 50%.” (15% likelihood)

“For me, the main question here is if there is indeed an AI bubble or not, and, if yes, whether it will burst or not in the given time period. If such a thing happens, I think there is a good chance (~70%-80%) that the question will resolve positively…. [A]ccording to economists Brent Goldfarb and David A. Kirsch, who literally wrote the book on the subject (bubbles) in 2019, AI has all the hallmarks of a bubble (an 8 on a 0-to-8 scale).” (30% likelihood)

“The base rate for Nvidia’s price falling by 50% in the space of around 1 year and 10 months while the semiconductor index drops by 25% in the same period, over the past decade, is about 35%-40%. A possible invasion of Taiwan by the end of 2027 or the possibility that there is an AI bubble that’s about to burst might push us higher.” (37% likelihood)

Reasons decreasing the likelihood of this occurring:

There are no historical instances of companies with number-one market capitalizations suffering a correction on this scale at this rate.

The correlation between NVDA and SOX, while likely robust, is not obviously unbreakable. NVDA’s share in the SOX is limited to 12% by rule, and semiconductor supply at present is sufficiently constrained that it is possible to imagine an AI-and-NVDA-specific downturn leaving other semi producers in a comparatively strong position.

The most plausible drivers of a correction on this scale are major exogenous events (China attacking Taiwan, a global recession) or atypical endogenous events (scandal or leadership change at NVDA) – all of which have fairly low, though not negligible, baseline likelihoods.

“‘How many times [has] a company that is #1 in market cap during the year X (>2 quarters in that year) lost 50% within X+2 years?’ The answer: it has never happened. It almost happened to MSFT in 2002 and can still happen to AAPL, MSFT or NVDA. But that’s it – a base rate of zero for now.” (11% likelihood)

“The AI bubble question is a big one, and I’m personally sceptical that we are in a bubble – forward P/E ratios don’t look too insane, and [my] guess [is that] a lot of the risk isn’t in semis themselves but in the more wild AI infra plays.” (24% likelihood)

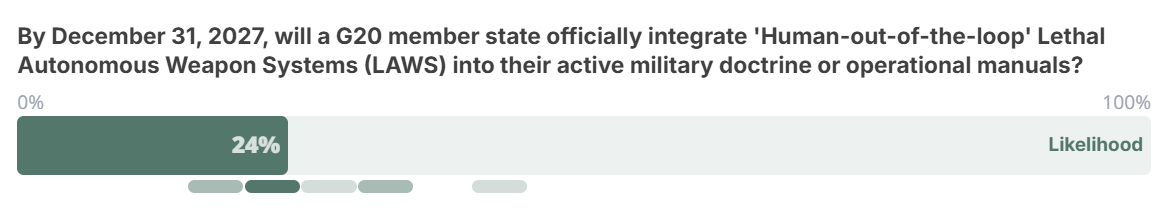

Question 4

By December 31, 2027, will a G20 member state officially integrate “Human-out-of-the-loop” Lethal Autonomous Weapon Systems (LAWS) into their active military doctrine or operational manuals?

Question background (full resolution criteria are at the end of this document)

The same few years that saw the boom in generative AI also saw drone warfare evolve into a key dimension of a full-scale conflict between Russia and Ukraine. The prospect of fully autonomous weapons systems (LAWS), and decisions on the extent to which they should be developed, deployed, and acknowledged, is confronting uniformed and civilian military planners across the developed world, and such systems may soon become available to less-developed nations as well.

This question has already broken into the public domain with the recent public standoff between Anthropic and the US Department of War. Secretary Pete Hegseth issued an explicit request for Anthropic to remove safety guardrails that prevent Claude from being used in fully autonomous lethal systems. Anthropic CEO Dario Amodei refused, stating that today’s AI is “not reliable enough to power fully autonomous weapons” and that doing so would put American warfighters and civilians at risk. Hours later the Pentagon signed a substitute agreement with OpenAI that seemingly retained the guardrails that had been at issue in the agreement with Anthropic.

This dispute highlights the “Rubicon” of military doctrine. While many G20 states, including the UK and US, have historically maintained a meaningful “human control” requirement, the pressure to drop such restrictions is mounting. The Pentagon’s move to designate Anthropic a “supply-chain risk” in retaliation for its refusal – an aggressive step that could have a destructive impact on the company’s business – suggests that the “human-in-the-loop” policy is increasingly viewed as an operational bottleneck.

The Swift Centre forecasts summarized here predated these dramatic events by a few days, but we believe that the fundamentals of our forecasts hold.

The forecasting question folds any debate over the technical viability of LAWS into the broader issue of their official adoption by militaries among the G20 countries. An important stipulation of the question is not just that the technology exists, but that it become an explicit element of a G20 country’s military power – thus the resolution criteria require that autonomous systems be not only developed or potentially covertly deployed but be openly integrated into a country’s military doctrine.

A country’s willingness to take this last step will obviously be influenced by its specific capabilities, its geostrategic situation, and its domestic politics and public opinion.

Are advanced militaries on the cusp of openly embracing autonomously operated weapons systems, and what role should policymakers play in the inevitable public debate over their adoption?

The Swift Centre’s forecast

The Swift Centre forecasters pegged the likelihood of a positive resolution of this question at 24%. The forecasts were fairly tightly bound around the median, ranging from 15% to 45%, with no conspicuous outliers.

The forecasters focused their analysis on the three main states they believed capable of deploying LAWS at scale within the stated timeframe: the US, China, and Russia. On technical viability the team was bullish, estimating that some forms of LAWS would likely be viable for these three countries in the near future – if they are not already. In addition, smaller G20 militaries could have their own strong strategic incentives to adopt LAWS soon, but they may lack the capacity to do so within the question’s timeframe.

But the forecasters also saw three significant obstacles to positive question fulfilment:

the typically slow pace of testing and adoption of new technologies by modern militaries, in the absence of exigent circumstances such as those created by an actual conflict;

the likely absence of an actual conflict involving these three nations within the next two years that could trigger a more rapid adoption of LAWS (the Russia-Ukraine conflict notwithstanding); and

the various political, strategic and legal disincentives for nations to explicitly acknowledge their adoption of LAWS in published military doctrine.

Among these obstacles, official acknowledgement was clearly the key sticking point for the forecasters. They saw very few incentives for any nation to explicitly endorse its adoption of autonomous weapons within the next two years, but they saw numerous disincentives, such as inevitable public blowback, geopolitical retaliation, and potential legal liability. They also noted that maintaining “strategic ambiguity” about military doctrine while LAWS are being developed covertly could be perceived by leaders as very advantageous in the current geostrategic situation – particularly for China, which might be seeking to hedge against US development of these capabilities.

Even without any historical base rate to start from, the forecasters converged around a likelihood of slightly over one-in-five. The odds were not even lower because, on the one hand, the weapons technologies themselves are likely to be imminently available, and on the other hand, there is a non-trivial chance that a military conflict will erupt that is serious enough to spur the combatants to use LAWS and to change the incentives around their explicit adoption. Of the three key countries, Russia was viewed as the most likely to be the first to officially incorporate LAWS into its doctrine, the US (at least under the current administration) as the second most likely, and China (which seems to benefit the most from “strategic ambiguity”) as the least likely.

Reasons increasing the likelihood of this occurring:

Initial forms of these weapons are likely to be technologically viable soon, if they are not already, and they promise to be very useful to militaries.

Within the two-year time frame, the odds of a new conflict starting that might trigger a country to declare its adoption of LAWS are not negligible (e.g., China and Taiwan).

Russia has already made statements that at least hint at an acceptance of autonomous weaponry. The US seems increasingly willing to flout longstanding norms in the military space.

“[I have] no doubt that everyone capable of building such systems is developing them – if only out of fear to be left behind. I’d give 95% that something that would satisfy resolution criteria here will be ~fully functional 22 months from now.” (20% likelihood)

“These tools are going to be too useful not to be widespread.” (33% likelihood)

“Russia and China are the most likely candidates. Russia’s CCW GGE 2024 paper hints at being accepting of autonomy: ‘We consider it inappropriate to introduce the concepts of “meaningful human control” and “form and degree of human involvement” promoted by individual States into the discussion, since such categories have no general relation to law and lead only to the politicization of discussions.’” (20% likelihood)

“The recent fight between Anthropic and Hegseth’s DOD/DOW showed a maximalist position from the US to not only threaten to drop contracts with an AI company that does not comply with all requests, but to threaten to drop any company that uses their technology.” (29% likelihood)

Reasons decreasing the likelihood of this occurring:

In the current state of play, substantial disincentives still discourage governments from publicly acknowledging that their militaries are incorporating LAWS – reputational cost, public backlash, legal vulnerability.

Historically, militaries have been slow to test and incorporate new weapons, let alone radically new forms of weaponry, into their doctrines and manuals, especially in peacetime. Testing alone is likely to take a while – and there is reason to believe that autonomous weapons will require more testing rather than less.

Over the next 22 months, the overall odds are against the commencement of a military conflict severe enough to motivate one of the major players to reconsider the disincentives to formal LAWS adoption.

To varying degrees among the G20 members (e.g., more for China, less for Russia), there are strategic advantages to maintaining an ambiguous posture on LAWS for now.

“Using such weapons (provided that they are available) is one thing; officially saying that their use becomes fair game and standard is quite another thing, with no clear upsides (and lots of downsides).... I cannot see why anyone would be willing to do such a (still largely controversial) thing in the given time frame (less than 2 years).” (15% likelihood)

“Is this enough time to fully test and officially incorporate such systems into military forces? I am not at all sure but lean toward “no more than 50% chance”. When I read about, for example, the timelines of major weapon systems (jets, hypersonic weapons, new types of missiles), those are counted in ~decades.” (20% likelihood)

“China prefers the strategic ambiguity of advocating against the use of human-out-of-the-loop LAWS while pursuing their development, in part to hedge against the perceived threat that the US will develop such weapons systems.” (20% likelihood)

“I do not know of any system that has been developed and is in testing that is capable of this level of full autonomy. Further, I remain confident that western countries would be extremely reluctant to make such systems part of their formal military doctrine, short of the expediencies of warfare. It is quite possible that Russia or China has such a system and perhaps even has it ready for deployment. But in both cases, they would be unlikely to formally announce such a system as part of their ongoing military doctrine. The political blow-back from that announcement would be excessive.” (31% likelihood)

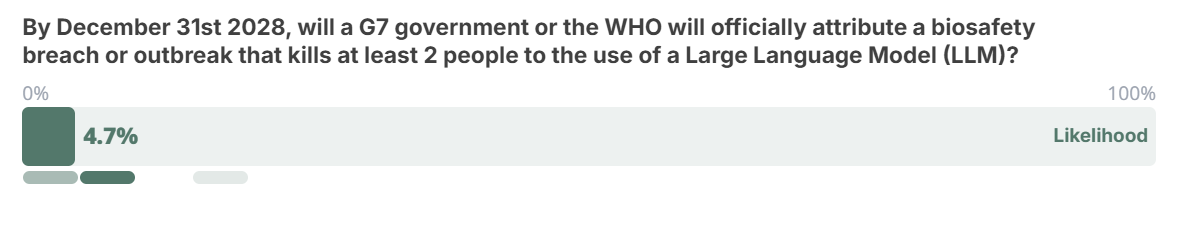

Question 5

By December 31, 2028, will a G7 government or the WHO officially attribute a biosafety breach or an outbreak that kills at least 2 people to the use of a Large Language Model (LLM)?

Question background (full resolution criteria are at the end of this document)

The emergence of generative AI seems bound to further complicate the already formidable task of detecting, tracking, attributing, and anticipating biosafety and bioterrorism risks. These risks get outsized media attention – fuelled by public anxiety related to terrorism, institutional accountability, the COVID pandemic experience, and disruptive technological change – even though biosafety incidents that cause substantial casualties seem to have been historically rare (or perhaps indeed for that very reason).

This forecasting question zeroes in on the intersection of LLM adoption and biosafety management, postulating not only a breach or outbreak but that the incident be officially attributed to the use of LLMs. The question thus directs attention to the process and motivations behind official attributions in the aftermath of such incidents.

The question’s scope is wide enough to cover both accident scenarios (such as a biolab leak) and bioterrorist attacks. On a strict reading it might be interpreted to include naturally arising pandemics as well, but the lack of any plausible link between LLMs and naturally originating disease outbreaks effectively leaves such pandemics outside the question scope.

Taking into account that the question criteria require fatalities as well as certain and official attribution, the pre-AI base rates of qualifying events are undoubtedly low. There has been one universally accepted qualifying event in the last 50 years. Bioterrorist attacks have not had high fatality rates. Biosafety breaches are probably more common than is generally known, but even when they are detected they have rarely resulted in fatalities.

Could public access to LLMs make incidents of this type more frequent, more dangerous, or both, and should policymakers channel significant additional resources towards heading off this risk?

The Swift Centre’s forecast

The Swift Centre team estimated that there was a 4.7% likelihood of an incident satisfying the question criteria occurring before the end of 2028. Individual estimates were almost uniformly under 10%, with one high outlier at 15%.

The team found that this scenario is unlikely to materialize largely because of its restrictive criteria – the stipulations of at least two fatalities, official attribution, and meaningful involvement of LLMs, all to occur within a three-year time window. They explicitly assumed that for an incident to be plausibly attributable to LLM use, the role that AI was alleged to play would have to be “significant”, not marginal.

On the bioterrorism side, the forecasters believe that the introduction of LLMs is unlikely to have a major impact, at least within the question’s time frame. The major barrier to most prospective DIY bioterrorists is a lack of access to facilities, materials, and practical expertise. What LLMs are best at is making specialized knowledge more accessible, but the information that one would need to engineer a biothreat – at least in principle – is already fairly easy to find online. Meanwhile, in their current forms, LLMs are unlikely to meaningfully expedite the tasks of procuring restricted materials and gaining access to specialized facilities.

The most plausible kind of bioterrorist attack that would meet the question’s criteria might be the dissemination of lower-level, less lethal common pathogens or toxins at a large scale – an attack such as a mass food or water poisoning. The relevant kinds of bioagents (salmonella, for example) would not be very lethal on a small scale, but if disseminated widely enough their impact could pass the bar of two fatalities. LLMs might be more helpful in engineering such an attack, because the materials and techniques needed are less specialized (an LLM could conceivably give a malicious actor the idea in the first place).

On the biosafety side, the forecasters were hard-pressed to imagine scenarios in which LLMs would be substantially implicated in an accident such as a lab leak in the question’s timeframe. While LLMs are likely to be increasingly used in lab research activities, they are much less likely to be integrated into lab safety protocols, and these protocols have themselves improved in recent years.

The question’s stipulation that an incident be announced and officially attributed to LLMs brought the team’s estimate down further. Either sort of incident would have to be detected and investigated, and its causes ascertained, before any attribution could even be considered. The perpetual controversy over the COVID lab-leak hypothesis demonstrates how fraught (and prolonged) such investigations can be.

Even assuming that an investigation definitively establishes an incident’s cause, authorities might face conflicting incentives when deciding how, or even whether, to make it public. On the one hand, fear of reputational damage might be a strong incentive for authorities not to publicize a small-scale bioterror attack or lab leak, or to delay any reporting as long as possible. On the other hand, a plausible attribution to LLMs – even a concocted one – might provide political actors with a convenient scapegoat. In the current climate, opportunistic leaders might be very happy to blame LLMs for a biosecurity failure.

Reasons increasing the likelihood of this occurring:

Biosafety breaches are more common than is generally known.

LLMs could plausibly help terrorists ideate and execute an attack that would spread common, less-lethal pathogens or toxins to a large population through food or water systems.

Given public anxiety about AI, politicians might have an incentive to attribute incidents to LLMs, whether or not the models contributed significantly (or at all) to causing the event.

“If there is a biosafety breach or an infectious disease outbreak, a large language model is quite likely to have been involved in the incident at this point (because LLMs are increasingly being used in a variety of domains), but I doubt that it will be identified as having provided ‘significant uplift’ (or anything equivalent). The most likely reason this would happen is if a government or the WHO wants to sensationalize the incident.” (8% likelihood)

“LLMs probably aren’t needed for trivial ways of causing an outbreak that results in at least 2 fatalities, and tacit and local knowledge is still going to be needed for the more complicated methods. However, it’s possible that LLMs give novices simple ideas which they wouldn’t otherwise have had, and the mortality threshold here is very low.” (8% likelihood)

“The ‘significant uplift’ may be tricky, but is a lot easier to reach than the criteria of full autonomy we have for other questions…. If this was about an actual infection, I’d be lower, but food or water poisoning seems easier to achieve.” (15% likelihood)

Reasons decreasing the likelihood of this occurring:

Historically, biosafety breaches have rarely been proven to result in fatalities.

Definitive attribution to any source can take a long time after biosecurity incidents – potentially much longer than the question’s timeframe.

LLMs are likely to be increasingly incorporated into microbiological research activities, but less likely to be incorporated into lab security protocols themselves. Lab safety protocols have generally improved in recent years.

LLMs are unlikely to substantially help terrorists overcome the primary obstacle to mounting a biological attack: a lack of access to necessary materials and facilities.

Authorities have strong incentives to conceal, deny, or at least delay the reporting and attribution of incidents to avoid embarrassment.

“I think this is highly unlikely for a couple of reasons, though I consider AI to be potentially quite useful in conducting such research.…The most important reason is that admission of such a disaster would be incredibly embarrassing. So it is hard for me to imagine any country willingly doing this, unless they were outed by a credible whistle-blower and a new administration saw it as politically advantageous to make such a statement.” (6% likelihood)

“I think if there is some small-scale bioterror incident, LLMs are likely to be involved. If that does happen, I think they may well have provided significant uplift. Even something like a repeat of the 2001 Anthrax attacks (or a successful version of Aum [Shinrikyo]’s attacks) are likely to involve LLMs these days, and I suppose a report would make that clear. However, it’s not clear that they’ll be causally or counterfactually important to the extent that these types of events are much more likely to occur now than they were before.” (8% likelihood)

Summary Graphics

Annex: Questions with resolution criteria

Question 1:

By December 31, 2027, a frontier AI agent will autonomously discover and exploit a previously unknown ('Zero-Day') vulnerability in a Tier 1 operating system on a real-world device without human intervention?

Resolution detail:

The forecast resolves as YES if a verified report (from a National AI Safety Institute (e.g., UK AISI), a G7 cybersecurity agency (e.g., NCSC, CISA), or a major AI company (e.g., OpenAI, Anthropic)) confirms that an AI agent independently completed the following:

Zero-Day Discovery of Tier 1 Target: Identified a vulnerability previously unknown to the software vendor (no CVE assigned at time of discovery) which compromises a current, supported version of Windows, macOS, Linux Kernel, Android, or iOS.

Real-World Device: Executed the exploit on physical hardware (e.g., a laptop or smartphone) rather than a simplified software-only simulation.

Full Administrative Control: Achieved Privilege Escalation (gaining root or SYSTEM access).

Autonomous "Kill Chain": Performed all steps (reconnaissance, discovery, and exploit generation/delivery) without human debugging, sub-prompting, or mid-process guidance.

No Mid-Process Intervention: The task fails if there is any "human-in-the-loop" approval at any point after the first prompt.

Resolution Note: Semi-autonomous aids (where humans provide tactical direction or fix AI coding errors) do not count. The agent must operate as a "closed-loop" system.

Question 2:

By December 31, 2027, the total number of workforce jobs in either the UK 'Information and Communication' (Section J) or 'Financial and Insurance' (Section K) sectors will have decreased by 10% or more from their September 2025 baseline?

Resolution detail:

The baseline figures are taken from the ONS December 16, 2025 release, which captures the state of the market from September 2025:

Information & Communication (Section J): 1.602m jobs

Financial & Insurance (Section K): 1.110m jobs

The source data will be:

Primary Source: ONS Dataset JOBS02: Workforce jobs by industry.

Resolution Date: The forecast will resolve based on the March 2028 release (which provides the final Q4 2027 figures).

Data Source: Resolution will use the 'Information and Communication' (Section J) and 'Financial and Insurance' (Section K) figures of the WFJ SA sheet (Seasonally Adjusted).

To resolve as YES, the headcount in at least one of these sectors must fall to or below the following targets by the final 2027 report:

Sector J Target: Equal to or less than 1,441,800 jobs (a 10% drop from 1.602m)

Sector K Target: Equal to or less than 999,000 jobs (a 10% drop from 1.110m)
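The two targets follow directly from the baselines. The arithmetic can be sketched in Python as below, assuming the figures are expressed in whole jobs. The names `target` and `resolves_yes` are illustrative only and are not part of the resolution criteria.

```python
# Baselines from the ONS release cited above (September 2025), in jobs.
BASELINES = {"J": 1_602_000, "K": 1_110_000}
DROP_PCT = 10  # required decrease, in percent


def target(baseline: int, drop_pct: int = DROP_PCT) -> int:
    """Largest job count that still represents a drop of at least drop_pct."""
    # Integer arithmetic avoids floating-point rounding at the threshold.
    return baseline * (100 - drop_pct) // 100


def resolves_yes(final_counts: dict[str, int]) -> bool:
    """True if at least one sector is at or below its target."""
    return any(final_counts[s] <= target(b) for s, b in BASELINES.items())


print({s: target(b) for s, b in BASELINES.items()})
```

With hypothetical final figures, `resolves_yes({"J": 1_450_000, "K": 995_000})` returns `True`, because Section K alone is at or below its target; only one sector needs to qualify.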

Question 3:

Between now and December 31, 2027, NVIDIA (NVDA) will fall by 50% from its peak, while the broader semiconductor index (SOX) drops by 25%?

Resolution detail:

The forecast resolves as YES if, at any point between now and the end of 2027, NVDA's price hits a low that is at least 50% below its highest price in that same window. During the period in which NVIDIA is falling, the Philadelphia Semiconductor Index (SOX) must also drop by at least 25% from its own recent high.
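One way to read these criteria is as a pair of drawdown tests. The Python sketch below is an illustration under stated assumptions, not an official resolution procedure: it assumes two aligned lists of daily prices starting at the beginning of the window, takes "that same period" to mean the interval from NVDA's peak to its qualifying low, and measures SOX against its own running high since the start of the window. The function names are hypothetical.

```python
from itertools import accumulate


def drawdowns(prices: list[float]) -> list[float]:
    """Fractional drop of each price from the running peak up to that day."""
    peaks = accumulate(prices, max)
    return [1 - price / peak for price, peak in zip(prices, peaks)]


def resolves_yes(nvda: list[float], sox: list[float],
                 nvda_drop: float = 0.50, sox_drop: float = 0.25) -> bool:
    """Check both conditions on aligned daily price series."""
    sox_dd = drawdowns(sox)
    peak_day = 0  # index of NVDA's running peak
    for day, price in enumerate(nvda):
        if price > nvda[peak_day]:
            peak_day = day
        nvda_fell = price <= nvda[peak_day] * (1 - nvda_drop)
        # Assumption: "that same period" is NVDA's peak-to-low interval.
        if nvda_fell and max(sox_dd[peak_day:day + 1]) >= sox_drop:
            return True
    return False
```

For example, with the made-up series `nvda = [100, 120, 90, 60]` and `sox = [200, 210, 180, 150]`, NVDA ends 50% below its peak of 120 while SOX ends about 29% below its high of 210, so `resolves_yes(nvda, sox)` returns `True`. A different reading of "recent high" would change the SOX check.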

Question 4:

By December 31, 2027, will a G20 member state officially integrate 'Human-out-of-the-loop' Lethal Autonomous Weapon Systems (LAWS) into their active military doctrine or operational manuals?

Resolution detail:

Human-out-of-the-loop: A system that selects and engages targets independently based on sensors and algorithms.

Included Systems: Systems that possess "Target Recognition" AI where the machine can identify targets and make the kill decision based on a human-specified objective (e.g. help us weaken 'x' faction in this area).

Excluded Systems: Defensive systems (e.g., Aegis, C-RAM) that are essentially automated shields, or drones that use AI only for flight navigation and terminal guidance toward a human-selected target.

Source: Official publications from a National Defence Ministry (e.g., UK MoD, US Department of War/DoD, Russia's MoD) or any branch of a G20 nation's armed forces (Army, Navy, Air Force).

Document Type: Strategic reviews, field manuals, or official procurement announcements stating that fully autonomous targeting is an authorised operational procedure.

Question 5:

By December 31st 2028, will a G7 government or the WHO officially attribute a biosafety breach or an outbreak that kills at least 2 people to the use of a Large Language Model (LLM)?

Resolution detail:

The forecast resolves as YES if a G7 government (including specialized AI Safety Institutes) or the WHO issues a report identifying an LLM as a "materially contributing factor" to the event.

The Event: Must involve at least 2 human fatalities resulting from:

Intentional Misuse: Deliberate acts such as intentional food/water poisoning, bioterrorism, or the engineered release of a pathogen.

Accidental Breach: A containment failure or lab-acquired infection where AI-generated protocols were used.

The "Uplift": The report must conclude that the AI provided Significant Uplift, defined as providing specialized knowledge, technical troubleshooting, or planning that the actor likely could not or would not have achieved without the model.